Image Colorization Using Deep Convolutional Neural Networks

Our goal in this Deep Learning course project was to replicate the ECCV 2016 paper Convolutional Sketch Inversion, which introduced a deep convolutional neural network (DNN) that generates photo-realistic images from face sketches. Image colorization is a very challenging problem. A color image of a person's face contains important information, such as the person's complexion and hair color, and this information is lost when the face is drawn as a sketch, leaving only high-level recognizable features. We implemented the network using the Chainer deep learning framework.
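
To see concretely why a sketch loses color information, consider a standard color-to-grayscale conversion. The sketch below assumes the ITU-R BT.601 luma weights (0.299, 0.587, 0.114); the project does not state which conversion was used to produce its grayscale inputs. Because the mapping sends three channels to one, very different colors can collapse onto the same gray level.

```python
def luma(r: int, g: int, b: int) -> int:
    """Convert an 8-bit RGB color to an 8-bit gray level.

    Uses the BT.601 luma weights; this choice is an assumption, not
    necessarily the conversion used in the project.
    """
    return round(0.299 * r + 0.587 * g + 0.114 * b)


# Pure red and a mid green have completely different hues, yet both map
# to the same gray value, so the gray level alone cannot tell them apart.
red = (255, 0, 0)
green = (0, 130, 0)
print(luma(*red), luma(*green))  # prints "76 76"
```

The same many-to-one collapse is what makes recovering complexion and hair color from a sketch an ill-posed problem: the network has to infer plausible colors rather than read them off the input.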

Of the 202,599 images in the Large-scale CelebFaces Attributes (CelebA) dataset, we used only 9,000 for training and 2,989 for validation. Only grayscale sketches were used to train the network. After training, we tested the network on two sets of images: Set A, taken from CelebA images that were not part of the training process, and Set B, collected from various online sources.
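
The write-up does not say how the 11,989 images were selected. A minimal sketch of one reproducible way to do it, assuming a seeded random sample and the aligned CelebA file naming `000001.jpg` through `202599.jpg`; the helper `split_celeba` and the seed are hypothetical, for illustration only:

```python
import random

TOTAL_IMAGES = 202_599  # number of images in CelebA
N_TRAIN = 9_000
N_VAL = 2_989


def split_celeba(seed: int = 0) -> tuple[list[str], list[str]]:
    """Draw disjoint training and validation file lists (hypothetical helper)."""
    rng = random.Random(seed)  # seeded so the same split can be regenerated
    # Sampling without replacement guarantees no image lands in both sets.
    ids = rng.sample(range(1, TOTAL_IMAGES + 1), N_TRAIN + N_VAL)
    # Assumed CelebA naming: zero-padded six-digit index plus ".jpg".
    names = [f"{i:06d}.jpg" for i in ids]
    return names[:N_TRAIN], names[N_TRAIN:]


train_files, val_files = split_celeba(seed=42)
print(len(train_files), len(val_files))  # prints "9000 2989"
```

Every CelebA image left out of both lists is unseen during training, which is the kind of pool Set A draws from.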

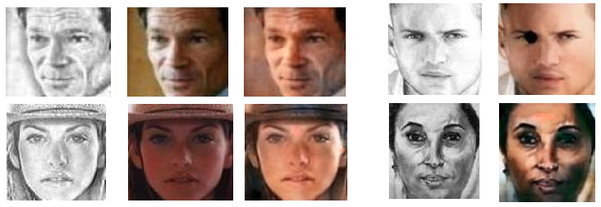

Figure: Results on Set A (input sketch, desired image, synthesized image) and on Set B (input sketch, synthesized image).

As shown above, the synthesized images are easily recognizable and closely match the desired images. The results on Set B show that our model generates color images from sketches found online about as well as it does from sketches of the kind it was trained on.
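
The comparison above is visual. One way to put a number on "closely match" for Set A, where a desired image exists, is the peak signal-to-noise ratio (PSNR). This metric was not part of the original project; the function below is an illustrative sketch that assumes both images are given as equally sized flat lists of 8-bit values.

```python
import math


def psnr(desired: list[int], synthesized: list[int], max_value: int = 255) -> float:
    """Peak signal-to-noise ratio in dB between two flattened 8-bit images."""
    if len(desired) != len(synthesized):
        raise ValueError("images must have the same number of values")
    # Mean squared error over all pixel values.
    mse = sum((d - s) ** 2 for d, s in zip(desired, synthesized)) / len(desired)
    if mse == 0:
        return math.inf  # identical images
    return 10 * math.log10(max_value ** 2 / mse)


# Every value off by 5 gives MSE = 25, so PSNR = 10 * log10(65025 / 25).
print(round(psnr([100, 150, 200], [105, 145, 205]), 2))  # prints 34.15
```

Higher is better. Set B has no desired image, so a reference-based metric like this applies only to Set A.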

Examples Where Model Failed

Below are some examples selected from both sets where our model failed.

Figure: Failure cases from Set A (input sketch, desired image, synthesized image) and from Set B (input sketch, synthesized image).

The system fails when the input sketch contains heavy shading. This is because our training data contains far more light, mostly white sketches than dark, heavily shaded ones. As a result, the network is unable to learn lighting and shadowing effects.
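
The explanation above rests on the balance between light and dark sketches in the training data. A minimal sketch for measuring that balance, assuming each sketch is a grayscale image stored as rows of values from 0 (black) to 255 (white); the helper names and the threshold of 128 are hypothetical choices, not values from the project:

```python
def mean_intensity(sketch: list[list[int]]) -> float:
    """Average gray level of a sketch (0 = black, 255 = white)."""
    values = [v for row in sketch for v in row]
    return sum(values) / len(values)


def dark_fraction(sketches: list[list[list[int]]], threshold: float = 128.0) -> float:
    """Fraction of sketches whose mean gray level is below the (hypothetical) threshold."""
    dark = sum(1 for s in sketches if mean_intensity(s) < threshold)
    return dark / len(sketches)


# Toy 2x2 data: two mostly white line drawings and one heavily shaded sketch.
light_1 = [[255, 255], [255, 0]]    # mean 191.25
light_2 = [[240, 255], [200, 255]]  # mean 237.5
shaded = [[40, 60], [30, 255]]      # mean 96.25
print(dark_fraction([light_1, light_2, shaded]))  # one of three sketches is dark
```

Run over the real training sketches, a value close to zero would be consistent with the imbalance described above.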